Voice and visual search are reshaping how we find information, each offering unique advantages. Voice search uses spoken input and natural language processing to deliver quick, conversational answers, while visual search lets you use your camera to identify objects and discover related information. Both rely on personalization – leveraging data like location, preferences, and behavior to tailor results.

Key insights:

- Voice Search: Ideal for quick, specific queries (e.g., "Where’s the nearest coffee shop?"). It excels in conversational ease and context-aware responses.

- Visual Search: Perfect for discovery when words fail (e.g., identifying a plant or shopping for a product). It focuses on visual context and style-based suggestions.

- Growth Trends: Visual queries have surged 300% from 2023 to 2025, with Google Lens alone now processing nearly 20 billion searches monthly. Voice search continues to grow by 35% annually.

Both technologies are transforming search personalization, with voice excelling in information access and visual search leading in discovery. Businesses optimizing for both can see up to a 30% increase in digital commerce revenue. The future lies in multimodal search, blending voice and visual inputs for even more tailored experiences.



How Voice Search Delivers Personalization

Voice search isn’t just about recognizing spoken words; it’s about understanding what you mean and tailoring results to fit your specific situation. By combining advanced technologies, voice search transforms simple spoken queries into responses that feel personal and relevant.

Natural Language Processing for Personalization

At the core of voice search lies Natural Language Processing (NLP), which does much more than convert speech into text. NLP digs into the intent behind your words, making interactions feel more like a conversation than a series of isolated commands.

For instance, when you ask, “What about tomorrow?” after checking today’s weather, the system intuitively connects the dots. It understands the context and provides a seamless follow-up, rather than treating the question as a standalone search.
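To make the follow-up behavior concrete, here is a minimal sketch of dialogue-state carryover, assuming a toy keyword parser. Real assistants use learned NLP models; the `DialogueState` class, the `parse` function, the keyword rules, and the slot names are all hypothetical and only illustrate how an elliptical follow-up can inherit the intent of the previous turn.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DialogueState:
    """Hypothetical conversation memory: the last intent and its slots."""
    intent: Optional[str] = None
    slots: dict = field(default_factory=dict)


DAY_WORDS = ("today", "tomorrow", "tonight")


def parse(utterance: str, state: DialogueState) -> tuple[str, dict]:
    """Toy parser: resolve an utterance into (intent, slots), reusing prior context."""
    text = utterance.lower()
    day = next((d for d in DAY_WORDS if d in text), None)

    if "weather" in text:
        intent, slots = "get_weather", {"day": day or "today"}
    elif text.startswith("what about") and state.intent is not None:
        # Elliptical follow-up: keep the previous intent, override only the new slot.
        intent, slots = state.intent, dict(state.slots)
        if day:
            slots["day"] = day
    else:
        intent, slots = "unknown", {}

    # Remember this turn so the next follow-up can build on it.
    state.intent, state.slots = intent, slots
    return intent, slots


state = DialogueState()
print(parse("What's the weather today?", state))  # ('get_weather', {'day': 'today'})
print(parse("What about tomorrow?", state))       # ('get_weather', {'day': 'tomorrow'})
```

Without the stored state, "What about tomorrow?" would fall through to `unknown`, which is exactly the standalone-search behavior the paragraph above contrasts against.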

Voice assistants like Siri and Google Assistant rely on NLP, along with both built-in and third-party data, to provide responses tailored to your needs. These assistants can handle tasks such as booking a table for dinner or recommending a coffee shop nearby. As Rachael Powell, Senior Technical SEO at Lumar, puts it:

"With voice-activated devices becoming more popular, voice search optimization is only getting more important. People are searching with full phrases and questions, so it’s key to have content that sounds natural and conversational".

This ability to process natural language is what enables voice search to deliver responses that feel highly relevant to each user.

How User Data and Context Shape Results

Voice search platforms work like sophisticated "match and rank" engines, combining your explicit query with additional context to refine results. They use data-driven profiles – mathematical representations of your habits, preferences, and behaviors – to prioritize results that align with what you’re most likely to want.
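The "match and rank" idea can be sketched as a two-stage scorer: first keep candidates that match the explicit query, then order them by a blend of query relevance and how closely each candidate's feature vector aligns with the user's profile vector. The feature axes, the `alpha` weight, and the sample data are illustrative assumptions, not any platform's actual ranking model.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def match_and_rank(query_terms, candidates, profile, alpha=0.6):
    """Stage 1: keep candidates whose tags overlap the query.
    Stage 2: rank by alpha * query relevance + (1 - alpha) * profile affinity."""
    terms = set(query_terms)
    scored = []
    for item in candidates:
        overlap = len(terms & set(item["tags"]))
        if overlap == 0:
            continue  # not a match for the explicit query
        relevance = overlap / len(terms)
        affinity = cosine(profile, item["features"])
        scored.append((alpha * relevance + (1 - alpha) * affinity, item["name"]))
    return [name for _, name in sorted(scored, reverse=True)]


# Hypothetical profile axes: [likes_fast_food, likes_vegetarian, likes_quiet]
profile = [0.2, 0.9, 0.7]
candidates = [
    {"name": "Burger Barn", "tags": ["lunch", "burgers"], "features": [0.8, 0.1, 0.2]},
    {"name": "Green Table", "tags": ["lunch", "salads"], "features": [0.3, 0.9, 0.8]},
    {"name": "Night Owl Bar", "tags": ["drinks"], "features": [0.5, 0.2, 0.1]},
]
print(match_and_rank(["lunch"], candidates, profile))  # ['Green Table', 'Burger Barn']
```

Both restaurants match "lunch" equally well, so the profile term decides the order; the bar is dropped because it never matched the query.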

Contextual factors such as location, time of day, and even the weather play a big role. For example, if you ask, “Where should I eat?” at noon on a rainy Tuesday, the system considers your GPS location, dining history, and the weather conditions to suggest the best nearby options. Richard Yao from IPG Media Lab highlights this approach:

"Contextual information such as location, time of the day, and the weather, coupled with a myriad of personal preferences, will all be taken into account in delivering the most relevant search results".

By blending personal data with real-time contextual signals, voice search platforms ensure their responses feel tailored to the moment.
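Contextual re-ranking for the "Where should I eat?" example might look like the sketch below: it drops venues that are closed at the current time, penalizes distance using the haversine great-circle formula, and boosts indoor seating when it is raining. The field names, weights, and coordinates are hypothetical; production systems learn these trade-offs from data rather than hard-coding them.

```python
import math
from datetime import time


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two (lat, lon) points, in kilometres."""
    r = 6371.0  # mean Earth radius in km
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def contextual_rank(places, user_lat, user_lon, now, raining):
    """Rank open places by preference, distance, and weather (hypothetical weights)."""
    ranked = []
    for p in places:
        if not (p["opens"] <= now < p["closes"]):
            continue  # time of day: drop venues that are closed right now
        score = p["preference"]  # stands in for the user's dining history
        score -= 0.5 * haversine_km(user_lat, user_lon, p["lat"], p["lon"])  # location
        if raining and p["indoor"]:
            score += 0.3  # weather: favour indoor seating when it rains
        ranked.append((score, p["name"]))
    return [name for _, name in sorted(ranked, reverse=True)]


places = [
    {"name": "Patio Tacos", "preference": 0.9, "indoor": False,
     "lat": 41.8790, "lon": -87.6300, "opens": time(11), "closes": time(22)},
    {"name": "Noodle House", "preference": 0.8, "indoor": True,
     "lat": 41.8800, "lon": -87.6310, "opens": time(11), "closes": time(21)},
    {"name": "Late Diner", "preference": 1.0, "indoor": True,
     "lat": 41.8785, "lon": -87.6295, "opens": time(18), "closes": time(23, 59)},
]
# Noon on a rainy day: the closed diner is dropped and indoor seating wins.
print(contextual_rank(places, 41.8781, -87.6298, time(12), raining=True))
# ['Noodle House', 'Patio Tacos']
```

On a dry day the same call ranks Patio Tacos first, which shows how the same query can return different answers as the context changes.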

Voice Search Personalization in Action

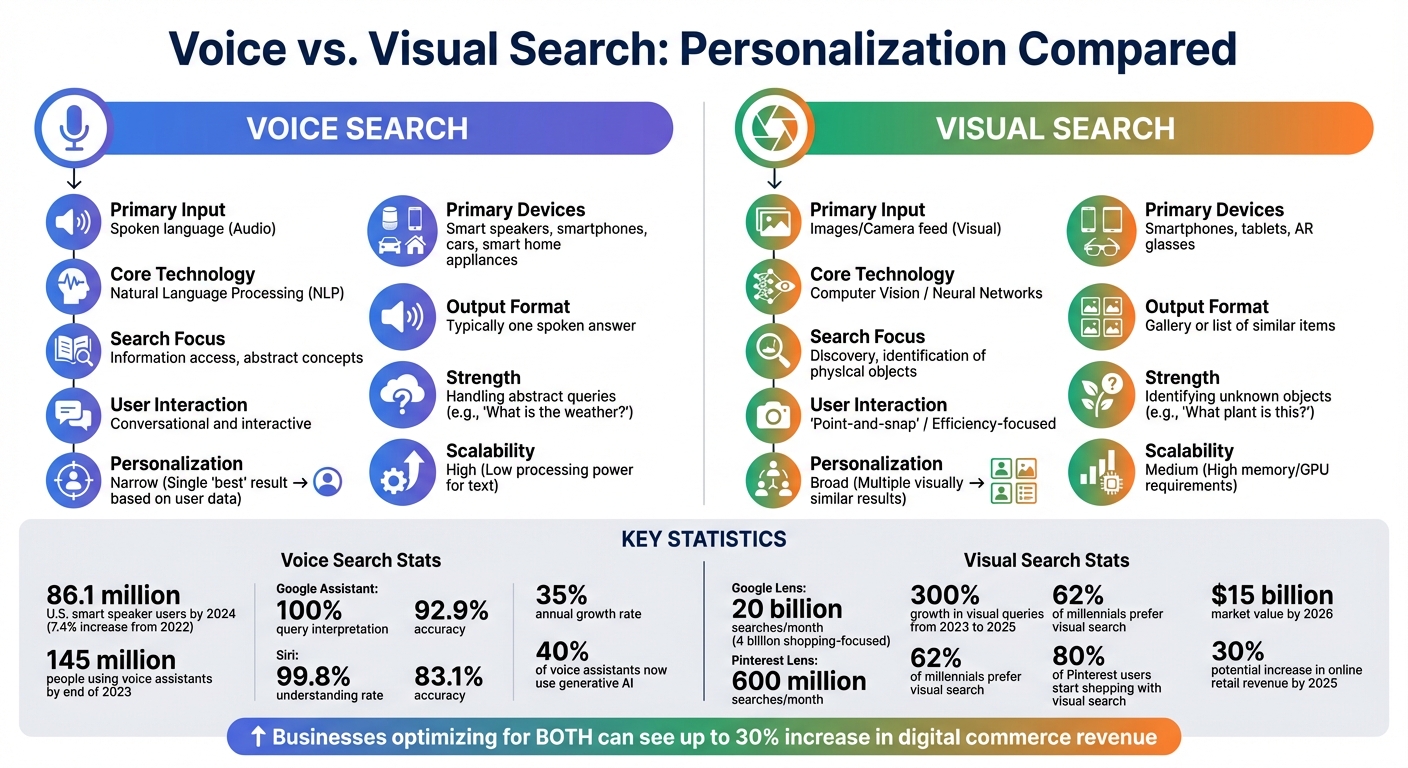

The impact of these advancements is clear in the numbers. The number of U.S. smart speaker users grew from 80.2 million in 2022 to 86.1 million in 2024. Including smartphones and connected cars, about 145 million people were using voice assistants by the end of 2023.

Performance benchmarks back up these capabilities. In one widely cited test, Google Assistant understood 100% of the queries it was asked and answered 92.9% of them correctly, while Siri understood 99.8% and answered 83.1% correctly. These systems also adapt to your speech patterns and habits over time, making interactions smoother.

Looking ahead, the integration of generative AI is taking personalization even further – 40% of voice assistants now use it to refine their responses. Additionally, sentiment analysis is being added to detect your tone and mood. This shift is moving voice search from simply reacting to your questions to predicting your needs, offering help before you even ask.

How Visual Search Delivers Personalization

Visual search is changing the way we find information. Instead of typing a query, you simply point your camera at an object, and AI takes over. It analyzes the image and delivers results that match your preferences and needs. Much like voice search, visual search adapts to your context and personal tastes.

Image Recognition and Object Detection

The backbone of visual search is computer vision, which allows AI to recognize and separate individual objects in an image. When you take a photo with multiple items, the system doesn’t just see a single picture – it identifies and categorizes each object. This means you can search for the exact item that caught your attention.

This process relies on feature vectors, which are mathematical representations of details like color, texture, shape, and patterns. The AI compares these vectors to a vast database of products to find precise matches. For instance, if you snap a photo of a quilted leather jacket, the system doesn’t just search for "jackets." It looks for items with that same quilted texture and leather material.
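The vector comparison step can be sketched as a brute-force nearest-neighbour search using cosine similarity. The four-dimensional vectors below are hand-made stand-ins for what an image encoder would produce (real embeddings typically have hundreds of dimensions), and the catalog SKUs are hypothetical; large systems usually replace the linear scan with an approximate nearest-neighbour index.

```python
import math


def normalize(v):
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v] if n else v


def top_k_matches(query_vec, catalog, k=2):
    """Brute-force nearest-neighbour search over a catalog of feature vectors."""
    q = normalize(query_vec)
    scored = []
    for sku, vec in catalog.items():
        sim = sum(a * b for a, b in zip(q, normalize(vec)))
        scored.append((sim, sku))
    scored.sort(reverse=True)
    return [(sku, round(sim, 3)) for sim, sku in scored[:k]]


# Hypothetical axes: [quilted_texture, leather_sheen, denim_weave, dark_color]
catalog = {
    "quilted-leather-jacket-black": [0.9, 0.8, 0.0, 0.9],
    "smooth-leather-jacket-black": [0.1, 0.9, 0.0, 0.9],
    "denim-jacket-blue": [0.0, 0.0, 0.9, 0.3],
}
photo = [0.85, 0.75, 0.05, 0.8]  # pretend output of an image encoder
print(top_k_matches(photo, catalog))
```

The quilted jacket outranks the smooth one because the texture dimension matches, which is the "same quilted texture and leather material" behavior described above.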

AI also uses automated tagging to assign metadata to images, identifying details like "distressed fabric" or "rounded collars". This detailed tagging ensures recommendations match your style preferences rather than offering generic options. By interpreting these visual details, the system can turn your photo into highly specific, personalized suggestions.
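Automated tags can complement vector matching. One simple option, shown here as an assumption rather than any specific platform's method, is to score catalog items by the Jaccard overlap between the tags detected in your photo and each item's tags. The tag vocabulary, products, and `min_score` cutoff are hypothetical.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Intersection size divided by union size (0.0 for two empty sets)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def rank_by_tags(photo_tags, products, min_score=0.15):
    """Rank products by tag overlap with the photo, dropping weak matches."""
    photo = set(photo_tags)
    scored = [(jaccard(photo, tags), name) for name, tags in products.items()]
    return [name for score, name in sorted(scored, reverse=True) if score >= min_score]


products = {
    "Vintage Denim Jacket": {"denim", "distressed fabric", "rounded collar", "blue"},
    "Classic Denim Jacket": {"denim", "pointed collar", "blue"},
    "Wool Overcoat": {"wool", "notched lapel", "grey"},
}
detected = ["denim", "distressed fabric", "rounded collar"]
print(rank_by_tags(detected, products))  # ['Vintage Denim Jacket', 'Classic Denim Jacket']
```

Because the vintage jacket shares the fine-grained "distressed fabric" and "rounded collar" tags, it scores 0.75 against 0.2 for the generic denim jacket.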

Using Visual Data for Tailored Results

Visual search doesn’t stop at matching – it also interprets real-time intent. For example, if you photograph a minimalist chair, the system recognizes your preference for clean lines and neutral tones. It then suggests similar items that align with that aesthetic.

The technology even mimics the experience of shopping in a boutique. It offers visually similar products with slight variations, just like a salesperson might show you alternatives that match your taste. On top of that, it factors in your browsing history and location to refine results. If you’ve recently searched for mid-century modern furniture, the system prioritizes similar styles in its recommendations.
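Showing "visually similar products with slight variations" is a diversity problem, because ranking purely by similarity tends to return near-duplicates. One standard technique that fits, though the text does not say any particular platform uses it, is maximal marginal relevance (MMR): it greedily picks items that are similar to the query photo but not too similar to items already chosen. The similarity values and the `lam` trade-off below are hypothetical.

```python
def mmr(query_sim, pair_sim, k, lam=0.7):
    """Greedy maximal marginal relevance (MMR) re-ranking.

    query_sim: {item: similarity to the user's photo}
    pair_sim:  {(item_a, item_b): similarity between two items}, looked up both ways
    lam:       1.0 = pure similarity; lower values reward variety
    """
    def sim(a, b):
        return pair_sim.get((a, b), pair_sim.get((b, a), 0.0))

    def mmr_score(item, selected):
        redundancy = max((sim(item, s) for s in selected), default=0.0)
        return lam * query_sim[item] - (1 - lam) * redundancy

    selected, remaining = [], sorted(query_sim)  # sorted for deterministic ties
    while remaining and len(selected) < k:
        best = max(remaining, key=lambda item: mmr_score(item, selected))
        selected.append(best)
        remaining.remove(best)
    return selected


# Hypothetical similarities for a photo of a minimalist oak chair.
query_sim = {"oak-chair": 0.95, "oak-chair-dup": 0.94, "walnut-chair": 0.85, "white-chair": 0.78}
pair_sim = {
    ("oak-chair", "oak-chair-dup"): 0.99,
    ("oak-chair", "walnut-chair"): 0.6, ("oak-chair", "white-chair"): 0.5,
    ("oak-chair-dup", "walnut-chair"): 0.6, ("oak-chair-dup", "white-chair"): 0.5,
    ("walnut-chair", "white-chair"): 0.4,
}
print(mmr(query_sim, pair_sim, k=3))           # ['oak-chair', 'walnut-chair', 'white-chair']
print(mmr(query_sim, pair_sim, k=3, lam=1.0))  # ['oak-chair', 'oak-chair-dup', 'walnut-chair']
```

With `lam=1.0` the near-duplicate takes the second slot; at the default it is pushed out in favor of two variations, much like a salesperson showing alternatives instead of the same chair twice.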

As Pinterest CEO Ben Silbermann put it:

"I really believe that the camera will be the next keyboard. It will be a fundamental tool you use to query the world around you".

Visual Search Personalization in Action

The numbers speak for themselves when it comes to visual search’s growing popularity. Google Lens processes 20 billion visual searches per month, with 4 billion focused on shopping. Similarly, Pinterest Lens handles around 600 million searches monthly, with 80% of Pinterest users starting their shopping journey with visual search.

Among millennials, 62% prefer visual search over traditional text-based methods, and 22% of users aged 16–34 have made purchases directly through visual search. The market for visual search technology is also booming: it is projected to reach $15 billion by 2026, with predictions that it could increase online retail revenue by 30% by 2025.

| Platform | Monthly Search Volume | Primary Personalization Focus |
|---|---|---|
| Google Lens | 20 billion | Real-time object identification and shopping across the web |
| Pinterest Lens | 600 million | Lifestyle, fashion, and home decor inspiration and discovery |
| Amazon StyleSnap | Not disclosed | Matching uploaded photos to shoppable items in Amazon’s catalog |

Visual search is advancing quickly. Multimodal search lets you combine images with text, such as uploading a shoe photo and adding "in red" to refine your results. Augmented Reality (AR) features allow you to try on glasses virtually or see how furniture looks in your home before buying. Social media platforms like TikTok and Snapchat are also integrating visual search, enabling users to "snap and shop" directly from their feeds.
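A query like a shoe photo plus "in red" can be sketched as image similarity for the "what" and a parsed text constraint for the "which". The catalog, the color vocabulary, and the keyword parsing are hypothetical simplifications; production multimodal systems typically embed image and text jointly rather than filtering on keywords.

```python
import math

COLORS = {"red", "blue", "black", "white", "green"}  # hypothetical color vocabulary


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def multimodal_search(image_vec, text, catalog, k=3):
    """Rank by visual similarity, keeping only items in a color named in the text."""
    wanted = {w.strip(".,!?") for w in text.lower().split()} & COLORS
    hits = [
        (cosine(image_vec, item["vec"]), item["name"])
        for item in catalog
        if not wanted or item["color"] in wanted  # no color named: keep everything
    ]
    return [name for _, name in sorted(hits, reverse=True)[:k]]


catalog = [
    {"name": "Runner X (black)", "color": "black", "vec": [0.9, 0.1, 0.2]},
    {"name": "Runner X (red)", "color": "red", "vec": [0.88, 0.12, 0.2]},
    {"name": "Court Classic (red)", "color": "red", "vec": [0.3, 0.8, 0.1]},
]
shoe_photo = [0.9, 0.1, 0.2]  # pretend embedding of the uploaded photo
print(multimodal_search(shoe_photo, "in red", catalog))  # ['Runner X (red)', 'Court Classic (red)']
```

The photo alone would return the black shoe first; adding "in red" keeps the same silhouette but swaps in the red variant.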

These developments show how visual search is moving beyond object identification. It’s becoming a powerful tool for delivering personalized recommendations based on your style, preferences, and behavior. This shift is turning visual search into a key player in creating tailored shopping experiences.

Voice vs. Visual Search: A Direct Comparison

Voice vs Visual Search: Key Differences and Statistics Comparison

Taking a closer look at voice and visual search reveals how these two technologies cater to personalization in very different ways. By understanding their distinctions, you can better determine which approach suits your specific needs.

Input Methods: Voice vs. Visual

Voice search revolves around spoken language. You simply speak your query, and the AI processes it using natural language processing (NLP). This method shines when you know exactly what you’re looking for. For instance, asking "What’s the weather today?" or "Where’s the nearest coffee shop?" delivers a quick, direct answer.

Visual search works differently. Instead of describing something, you use your camera to capture it. This approach is ideal when words fail you. Imagine spotting an unusual plant on a hike – just snap a picture, and the AI, using computer vision, identifies it without needing a name. These differences make voice and visual search complementary tools, each excelling in specific scenarios.

Voice search is great for abstract queries like weather updates or general knowledge, while visual search excels at identifying physical objects in real time. The way each delivers personalized results also varies. Voice search feels conversational, letting you refine your query with follow-up questions. Visual search, on the other hand, is a quick "point-and-snap" experience, prioritizing efficiency. However, emerging technologies are beginning to merge these two methods, offering even greater versatility.

Devices and Ecosystems

The devices and ecosystems that support these technologies further shape their use cases. Voice search has expanded far beyond smartphones into smart speakers running Amazon Alexa and Google Assistant, as well as connected cars and smart home devices. By 2024, the U.S. had 86.1 million smart speaker users, a 7.4% increase from 2022.

Visual search, meanwhile, depends on devices equipped with cameras, such as smartphones and tablets, and is increasingly being integrated into AR tools and smart glasses. Google Lens alone processes nearly 20 billion visual searches every month, with 20% of those related to shopping. The leaders in this space are clear: Amazon (Alexa), Apple (Siri), and Google (Assistant) dominate voice, while Google Lens, Pinterest Lens, and Samsung’s Bixby Vision lead the visual search landscape.

The future lies in blending these technologies. Tools like GPT-4o and Project Astra are already combining visual and voice inputs, enabling simultaneous image capture and voice queries. This fusion is redefining how searches are conducted and how results are tailored to users.

Comparison Table: Voice vs. Visual Search

Here’s a quick breakdown of the main differences between these two search methods:

| Feature | Voice Search | Visual Search |
|---|---|---|
| Primary Input | Spoken language (audio) | Images/camera feed (visual) |
| Core Technology | Natural Language Processing (NLP) | Computer vision / neural networks |
| Search Focus | Information access, abstract concepts | Discovery, identification of physical objects |
| User Interaction | Conversational and interactive | "Point-and-snap" / efficiency-focused |
| Personalization | Narrow (single "best" result based on user data) | Broad (multiple visually similar results) |
| Primary Devices | Smart speakers, smartphones, cars, smart home appliances | Smartphones, tablets, AR glasses |
| Output Format | Typically one spoken answer | Gallery or list of similar items |
| Strength | Handling abstract queries (e.g., "What is the weather?") | Identifying unknown objects (e.g., "What plant is this?") |
| Scalability | High (low processing power for text) | Medium (high memory/GPU requirements) |

Choosing the right technology depends on your industry focus. Service-oriented brands like travel or fitness might see better results with voice search, while product-focused brands in areas like fashion or consumer goods should invest in visual search optimization.

How to Optimize for Personalized Search

To stay ahead in personalized search, it’s important to tailor content for both voice and visual search. Brands that adapt their websites to meet the needs of these technologies can see a significant boost in digital commerce revenue – up to 30%.

Voice Search Optimization Techniques

Voice search is all about natural, conversational language. Instead of focusing on short, generic keywords like "best pizza Chicago", aim for phrases that reflect how people actually speak, such as "Where can I find the best pizza near me?" These long-tail keywords align with real user queries.
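To find conversational, long-tail phrasing worth targeting, one approach is to mine your own query data for question-style searches. The sketch below assumes a plain list of query strings (for example, copied from a search analytics export) and uses a hypothetical heuristic: the query starts with a question word and has at least five words. The word list and threshold are illustrative, not a standard.

```python
QUESTION_WORDS = {"who", "what", "where", "when", "why", "how", "can", "is", "are", "which"}


def is_conversational(query: str, min_words: int = 5) -> bool:
    """Heuristic: starts with a question word and is long enough to be long-tail."""
    words = query.lower().replace("’", "'").strip(" ?!.").split()
    if len(words) < min_words:
        return False
    first = words[0].split("'")[0]  # "where's" -> "where"
    return first in QUESTION_WORDS


queries = [
    "best pizza chicago",
    "where can i find the best pizza near me",
    "Where’s the nearest coffee shop?",
    "pizza open late",
]
print([q for q in queries if is_conversational(q)])
```

The short keyword-style queries are filtered out, leaving the natural-language questions that voice users actually speak.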

Adding FAQPage schema markup to your site can make your content more accessible for voice assistants. Provide concise answers (one to two sentences) so these tools can easily extract and relay the information. Local SEO plays a big role here too – keep your business details, like hours, location, and parking options, up-to-date. Since voice assistants often rely on GPS data to deliver results, this information ensures your business is visible in local searches. And because voice assistants typically highlight only the top result, maintaining a strong domain authority and good relationships with platform owners is crucial.
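FAQ markup is usually embedded as JSON-LD using the schema.org `FAQPage`, `Question`, and `Answer` types. This sketch generates that markup from question-and-answer pairs; the `faq_jsonld` helper and the business details are hypothetical, and it is worth checking the search engine's current structured-data guidelines before relying on rich results.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Keep each answer to one or two sentences so assistants can read it aloud.
markup = faq_jsonld([
    ("What are your opening hours?", "We are open 11 a.m. to 10 p.m., seven days a week."),
    ("Is there parking nearby?", "Yes, free street parking is available on Main Street."),
])
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from the same source as the visible FAQ text keeps the two from drifting apart.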

Now let’s shift gears to the visual side of personalization.

Visual Search Optimization Techniques

For visual search, image quality is key. Use high-resolution images (at least 1,200 pixels wide, ideally 2,000+) in WebP format to ensure clarity and fast loading. WebP images are typically about 30% smaller than comparable JPEGs while retaining quality, making them ideal for this purpose. With Google Lens now handling nearly 20 billion searches per month, investing in top-notch visuals is more important than ever.
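A quick audit can flag source images that fall below the 1,200-pixel guideline before you convert them. This standard-library sketch reads width and height straight from a PNG file's IHDR header (bytes 16 to 24, stored as big-endian 32-bit integers); it handles PNG only, the `png_size` and `audit` helpers are hypothetical, and the actual WebP conversion would typically be done with an imaging library such as Pillow.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
MIN_WIDTH = 1200  # minimum from the guideline above; 2,000+ is preferred


def png_size(header: bytes) -> tuple[int, int]:
    """Return (width, height) from the first 24 bytes of a PNG file."""
    if len(header) < 24 or header[:8] != PNG_SIGNATURE or header[12:16] != b"IHDR":
        raise ValueError("not a PNG file")
    width, height = struct.unpack(">II", header[16:24])  # two big-endian uint32s
    return width, height


def audit(paths):
    """Yield (path, width, ok) for each PNG, where ok means width >= MIN_WIDTH."""
    for path in paths:
        with open(path, "rb") as f:
            width, _ = png_size(f.read(24))
        yield path, width, width >= MIN_WIDTH


# Usage (hypothetical file names):
# for path, width, ok in audit(["hero.png", "detail-1.png"]):
#     print(path, width, "OK" if ok else f"below {MIN_WIDTH}px")
```

Reading only the header keeps the audit fast even across thousands of product photos, since no pixel data is decoded.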

To enhance discoverability, optimize your image metadata. Use descriptive, keyword-rich file names like "womens-black-leather-moto-jacket.jpg" instead of generic ones like "IMG_1234.jpg". Keep alt text concise (under 125 characters) but descriptive, mentioning details like material, color, and texture. Research shows that 32.5% of Google Lens results align with keywords in the page title, while in 11.4% the alt text matches Lens’s AI-generated labels. Include 7–9 images per product, showing multiple angles and close-ups. Structured data using schema.org types such as Product and ImageObject helps search engines better understand your visuals, while dedicated image sitemaps improve the discoverability of images loaded via JavaScript.
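The metadata steps above can be partly automated. This sketch, using only the standard library, turns a product description into a descriptive file name, checks alt text against the 125-character guideline, and builds an image sitemap with Google's `image:` sitemap extension namespace. The helper names and example URLs are hypothetical.

```python
import re
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
IMAGE_NS = "http://www.google.com/schemas/sitemap-image/1.1"


def slug_filename(description: str, ext: str = "webp") -> str:
    """'Women's Black Leather Moto Jacket' -> 'womens-black-leather-moto-jacket.webp'."""
    text = re.sub(r"['’]", "", description.lower())     # drop apostrophes
    slug = re.sub(r"[^a-z0-9]+", "-", text).strip("-")  # hyphenate everything else
    return f"{slug}.{ext}"


def alt_text_ok(alt: str, limit: int = 125) -> bool:
    """True if alt text is non-empty and within the character guideline."""
    return 0 < len(alt.strip()) <= limit


def image_sitemap(pages: dict[str, list[str]]) -> str:
    """One <url> per page, with an <image:image> entry for each image URL on it."""
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("image", IMAGE_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page, images in pages.items():
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = page
        for src in images:
            image = ET.SubElement(url, f"{{{IMAGE_NS}}}image")
            ET.SubElement(image, f"{{{IMAGE_NS}}}loc").text = src
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


print(slug_filename("Women's Black Leather Moto Jacket", "jpg"))
# womens-black-leather-moto-jacket.jpg
print(image_sitemap({
    "https://example.com/products/moto-jacket": [
        "https://example.com/images/womens-black-leather-moto-jacket-front.webp",
        "https://example.com/images/womens-black-leather-moto-jacket-back.webp",
    ],
}))
```

Listing every angle and close-up under the product page URL lets crawlers find images even when the page injects them with JavaScript.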

But optimizing images and metadata is only part of the equation – your overall content strategy matters too.

Creating Content for Both Search Types

With 62% of Gen Z preferring visual search and half of all online users engaging with voice search daily, it’s clear that catering to both is non-negotiable. Product descriptions should be crafted for voice queries, while images need to be optimized for visual discovery. This dual approach ensures search results align with user intent and context.

Take Nike, for example. Its "Nike Fit" technology uses visual recognition for precise footwear sizing while also offering voice-accessible product details. Shopify, on the other hand, has reported a 40% reduction in product return rates from integrating 3D and AR media, which helps customers better visualize products before purchasing.

The future of search is multimodal, blending text, image, and voice inputs to create richer experiences. Imagine pointing your camera at an item and asking, "Where can I buy this in blue?" This merging of voice and visual capabilities opens up exciting opportunities to deliver personalized results that adapt to how users interact with technology.

Conclusion: Choosing the Right Search Strategy

Key Takeaways

Voice and visual search are transforming how personalization connects brands with their audiences. Each offers distinct advantages depending on your business focus. Voice search shines in providing quick, conversational answers to specific queries – like business hours, weather updates, or directions – making it a natural fit for service-oriented brands. On the other hand, visual search is a game-changer for discovery. It helps users identify items they might struggle to describe, which is especially useful for product-driven industries like fashion and home decor. With billions of queries each month on Google Lens and growing popularity among Gen Z shoppers, visual search is becoming essential for reaching younger audiences and driving product discovery.

The best approach? Combine both. Brands that adapt their websites for voice and visual search can see up to a 30% increase in digital commerce revenue. Service brands should focus on optimizing for voice with natural language content and local SEO. Meanwhile, product brands can gain an edge with high-quality visuals and structured data. Many businesses find a hybrid strategy brings the best of both worlds, blending the strengths of these technologies to meet diverse user needs. As search technology evolves, the potential for even greater personalization is on the horizon.

What’s Next for Search and Personalization

The future of search is moving beyond voice or visual alone: it’s becoming multimodal. AI is enabling users to combine different inputs, like snapping a photo of an item and asking, "Where can I buy this in blue?" Myriam Jessier, Marketing Consultant at Neurospicy Agency, highlights this shift:

"A rise in multimodal search means image optimization is going to be prevalent [in 2025]".

AI is moving search from a mobile-first world to one where audio, visuals, and text work together seamlessly. New technologies, like gaze tracking and focus analysis, are already being used to infer user intent. For businesses, this means personalization will become even more advanced. Success will depend on creating content that integrates smoothly across multiple input methods, delivering results tailored to how users naturally interact with technology. The search landscape is evolving rapidly, and staying ahead means embracing these tools to meet users where they are.

FAQs

Which is better for my business: voice or visual search?

Choosing between voice search and visual search comes down to your business goals and how your audience engages with your products or services.

- Voice search works best for quick, hands-free tasks, making it a natural fit for industries centered on convenience and instant answers.

- Visual search, on the other hand, shines when customers are looking for something specific based on images – perfect for sectors like retail or fashion.

To appeal to a wider range of preferences, integrating both technologies can provide a more tailored and engaging experience for your customers.

What data is used to personalize voice and visual search results?

Voice search adapts to users by analyzing spoken inputs, location, time, and personal preferences to provide results that feel customized. On the other hand, visual search focuses on image data – like visual features and metadata – combined with user behavior and interaction history. Both methods rely on real-time data and contextual clues to improve accuracy and relevance, offering a more personalized search experience.

How do I measure ROI from voice and visual search optimization?

To gauge ROI effectively, focus on tracking essential metrics tailored to voice and visual search. For voice search, keep an eye on organic traffic driven by voice queries, performance in featured snippet rankings, and visibility in local search results. For visual search, monitor traffic growth from tools like Google Lens and track conversions from users engaging with visual search.

Additionally, analyze changes in conversion rates, average order value, and engagement metrics. These data points will help you measure the impact on revenue and ensure your efforts align with your business objectives.
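These metrics can be rolled into a simple per-channel report. This sketch assumes you can already attribute sessions, orders, revenue, and optimization spend to a channel label such as "voice" or "visual" (how you do that depends on your analytics setup and is not shown). It treats ROI as (attributed revenue - cost) / cost, and all figures are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChannelStats:
    """Hypothetical per-channel totals for one reporting period."""
    sessions: int
    orders: int
    revenue: float  # revenue attributed to this channel
    cost: float     # optimization spend attributed to this channel

    @property
    def conversion_rate(self) -> float:
        return self.orders / self.sessions if self.sessions else 0.0

    @property
    def average_order_value(self) -> float:
        return self.revenue / self.orders if self.orders else 0.0

    @property
    def roi(self) -> Optional[float]:
        """(revenue - cost) / cost; None when no cost was recorded."""
        return (self.revenue - self.cost) / self.cost if self.cost else None


report = {
    "voice": ChannelStats(sessions=4000, orders=120, revenue=9600.0, cost=3000.0),
    "visual": ChannelStats(sessions=2500, orders=150, revenue=13500.0, cost=5000.0),
}
for channel, s in report.items():
    print(f"{channel}: conversion {s.conversion_rate:.1%}, "
          f"AOV ${s.average_order_value:.2f}, ROI {s.roi:.0%}")
# voice: conversion 3.0%, AOV $80.00, ROI 220%
# visual: conversion 6.0%, AOV $90.00, ROI 170%
```

Comparing these numbers against the same period before optimization is what turns them into an ROI story rather than a snapshot.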